Automated lip reading software download

“It’s been shown that the accuracy of in-car voice activation degrades badly, with passengers, whether the radio is on and the age of vehicle.” He said that voice-activated cars degrade over time because the car starts to emit more engine noise, and lets in more road noise. “That’s a little bit down the line, but there are plenty of lucrative use cases that we’re addressing initially that require less vocab support.” “We want to develop a product that supports a large vocabulary of the 130,000 words in the English language, along with other languages, and will perform in real time,” McQuillan said. McQuillan tells me the scope of Liopa is still being determined. Liopa is the product of two academic researchers, Dr Darryl Stewart and Dr Fabian Campbell-West, joining forces with two proven commercial entrepreneurs, McQuillan and his colleague Richard McConnell. “We’re commercialising research that’s been done for the past 10 years at QUB on lip-reading technology,” said McQuillan. It helps in real-world noisy environments – for example, using a voice activation system in a car, or a virtual assistant in a restaurant, or outside. It works on any device with a standard camera, especially in situations where you can train the camera directly at the speaker’s face. The technology is agnostic to audio noise and, when combined with audio speech recognition, will improve accuracy of the overall system.

#Automated lip reading software download software#

Using an AI-based core, the software deciphers what the person is saying. Instead, Liopa’s technology analyses a video of the speaker’s lip movements. “These audio systems can be very accurate but the problem is that, when there’s background noise, the accuracy and usability degrades rapidly,” McQuillan said. Today, speech recognition is based on analysis of the speaker’s audio signal. Liopa founders, from left: Richard McConnell, Liam McQuillan, Dr Darryl Stewart and Dr Fabian Campbell-West.

McQuillan and his team have used machine learning to create a unique automated lip-reading application called Liopa. Higher processing capability through GPUs, more training data and sophisticated developments in Google DeepMind have made their impact. The introduction of machine-learning techniques has accounted for a huge leap forward in the technology. But it’s taken a long time to come to fruition, due to the intricacies of languages and the fact that no two people speak exactly alike. For the automotive industry alone, the voice recognition market is projected to be worth $3.9bn by 2025.įor decades now, computer scientists have championed voice as being the holy grail of the human-computer interface. “Meanwhile, voice activation is becoming more popular in cars,” said Liopa’s co-founder, Liam McQuillan. Voice applications are all the rage right now, with Alexa, Siri, Cortana and Google Assistant seeing an upsurge of new users. The paper also provides an essential survey of the research area.TechWatch editor Emily McDaid hears from the team behind Liopa, which has created an app that can read lips. All the contributions serve to enhance the accuracy of lip reading sentences.

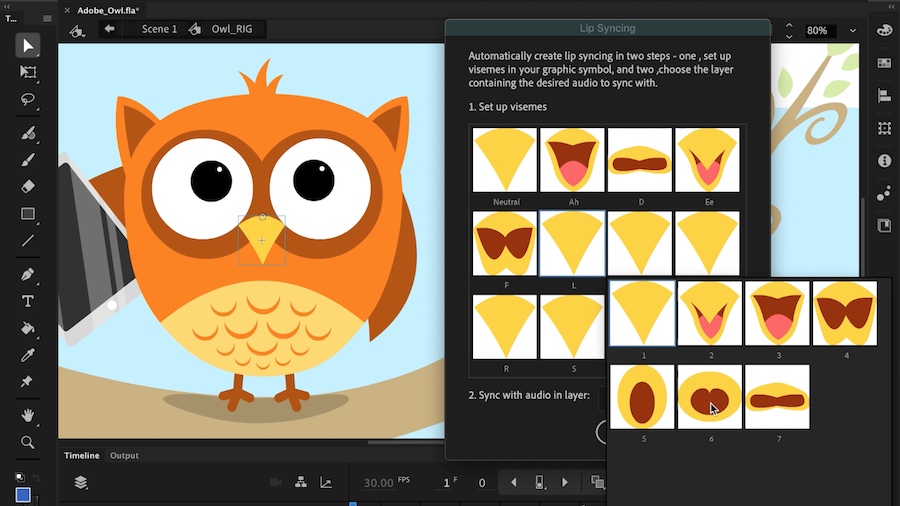

The main contributions of this paper are: 1) The classification of visemes in continuous speech using a specially designed transformer with a unique topology 2) The use of visemes as a classification schema for lip reading sentences and 3) The conversion of visemes to words using perplexity analysis. Compared with the state-of-the-art works in lip reading sentences, the system has achieved a significantly improved performance with 15% lower word error rate.

Experiments with videos of varying illumination have shown that the proposed model has a good robustness to varying levels of lighting. The system has been testified on the challenging BBC Lip Reading Sentences 2 (LRS2) benchmark dataset. With only a limited number of visemes as classes to recognise, the system is designed to lip read sentences covering a wide range of vocabulary and to recognise words that may not be included in system training. The system is lexicon-free and uses purely visual cues.

In this paper, a neural network-based lip reading system is proposed.
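The abstract relies on visemes, the visual counterparts of phonemes, as classification units. Because several phonemes look the same on the lips, many phoneme sequences collapse onto one viseme sequence. That is why a small viseme inventory can cover a large vocabulary, and also why visemes alone leave words ambiguous. The Python sketch below illustrates this many-to-one mapping. The viseme table, class names such as `V_bilabial`, and the toy pronunciations are hypothetical choices for illustration, not the viseme set used in the paper.

```python
# Hypothetical viseme table: a few phonemes grouped by how they look on the lips.
# Real viseme sets differ between studies; this is illustration only.
PHONEME_TO_VISEME = {
    "p": "V_bilabial", "b": "V_bilabial", "m": "V_bilabial",  # lips pressed together
    "f": "V_labiodental", "v": "V_labiodental",               # lower lip to upper teeth
    "t": "V_alveolar", "d": "V_alveolar", "n": "V_alveolar",  # tongue tip, mostly hidden
    "ae": "V_open_front",                                     # open front vowel
}


def to_visemes(phonemes):
    """Map a phoneme sequence to the corresponding viseme sequence."""
    return tuple(PHONEME_TO_VISEME[p] for p in phonemes)


# Toy pronunciations using lowercase ARPAbet-style symbols (hypothetical subset).
WORDS = {
    "pat": ["p", "ae", "t"],
    "bat": ["b", "ae", "t"],
    "mat": ["m", "ae", "t"],
    "pan": ["p", "ae", "n"],
    "fat": ["f", "ae", "t"],
    "vat": ["v", "ae", "t"],
}


def homophene_groups(words):
    """Group words whose pronunciations collapse to the same viseme sequence."""
    groups = {}
    for word, phones in words.items():
        groups.setdefault(to_visemes(phones), []).append(word)
    return groups


print(len(PHONEME_TO_VISEME), "phonemes map onto",
      len(set(PHONEME_TO_VISEME.values())), "visemes")
for visemes, group in homophene_groups(WORDS).items():
    print(" ".join(visemes), "->", group)
```

Under this toy table, "pat", "bat", "mat" and "pan" fall into one homophene group, so the visual signal alone cannot tell them apart; resolving that ambiguity is the job of a later word-level stage.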
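The abstract lists converting visemes to words using perplexity analysis as a contribution, but does not describe the language model involved. The following is only a minimal sketch under stated assumptions: word boundaries are already known, each word position comes with a list of homophene candidates, and an add-one smoothed bigram model trained on a tiny hypothetical corpus scores each candidate sentence. The sentence with the lowest perplexity, `exp(-(1/N) * sum(log P(w_i | w_{i-1})))`, is chosen. `BigramLM`, `best_sentence` and the corpus are illustrative names and data, not the paper's.

```python
import math
from collections import Counter
from itertools import product


class BigramLM:
    """Add-one (Laplace) smoothed bigram language model; toy, for illustration."""

    def __init__(self, sentences):
        self.bigrams = Counter()   # counts of (previous word, word)
        self.contexts = Counter()  # counts of each token used as a history
        vocab = set()
        for sentence in sentences:
            tokens = ["<s>"] + sentence.split() + ["</s>"]
            vocab.update(tokens[1:])  # every token that can be predicted
            for prev, cur in zip(tokens, tokens[1:]):
                self.bigrams[(prev, cur)] += 1
                self.contexts[prev] += 1
        self.vocab_size = len(vocab)

    def log_prob(self, prev, cur):
        # Add-one smoothing gives unseen bigrams (and unseen words) nonzero mass.
        return math.log((self.bigrams[(prev, cur)] + 1)
                        / (self.contexts[prev] + self.vocab_size))

    def perplexity(self, words):
        tokens = ["<s>"] + list(words) + ["</s>"]
        total = sum(self.log_prob(p, c) for p, c in zip(tokens, tokens[1:]))
        return math.exp(-total / (len(tokens) - 1))


def best_sentence(lm, candidates_per_slot):
    """Return the candidate word sequence with the lowest perplexity.

    Exhaustively scores every combination of homophene candidates.
    """
    return min(product(*candidates_per_slot), key=lm.perplexity)


# Hypothetical training text and homophene candidates, for illustration only.
corpus = [
    "the cat sat on the mat",
    "a cat on a mat",
    "the dog sat on the mat",
    "he hit the ball with a bat",
]
lm = BigramLM(corpus)
slots = [["the"], ["cat"], ["sat"], ["on"], ["the"], ["mat", "bat", "pat", "pan"]]
print(" ".join(best_sentence(lm, slots)))
```

The exhaustive search in `best_sentence` grows exponentially with the number of ambiguous positions; a practical decoder would more likely prune candidates with beam search and use a stronger language model.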
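The abstract reports its improvement as a lower word error rate (WER). WER is the word-level edit distance between the recognised sentence and the reference transcript, divided by the number of reference words: (substitutions + deletions + insertions) / N. A minimal Python implementation of that standard definition, using dynamic programming (the function name `word_error_rate` is illustrative):

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words,
    computed as a word-level Levenshtein distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference prefix processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        cur = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            cur[j] = min(prev[j] + 1,         # deletion of a reference word
                         cur[j - 1] + 1,      # insertion of a hypothesis word
                         prev[j - 1] + cost)  # substitution, or match if cost is 0
        prev = cur
    return prev[len(hyp)] / len(ref)


# One substitution (cat -> bat) and one deletion ("the") over six reference words.
print(word_error_rate("the cat sat on the mat", "the bat sat on mat"))
```

Because insertions are counted, WER can exceed 1.0 when the hypothesis is much longer than the reference.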